In Brief: December 2019

Recent publications from faculty in Chemical Engineering, Industrial Engineering and Operations Research, and Mechanical Engineering

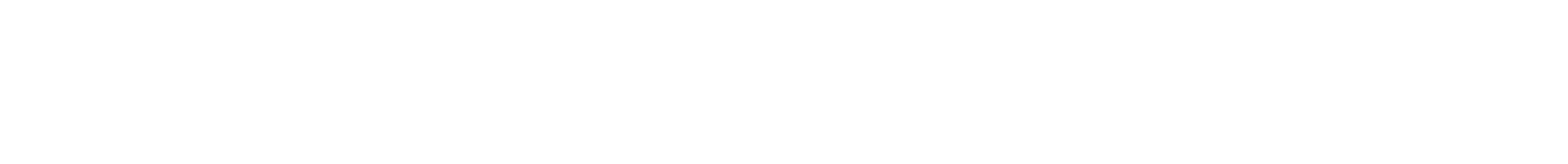

Graphic representation of a shape-shifting particle's trajectory.

Self-Driving Microrobots

Most synthetic materials, including those in battery electrodes, polymer membranes, and catalysts, degrade over time because they lack internal repair mechanisms. If autonomous microrobots could be distributed within these materials, they could continuously make repairs from the inside. A new study from the lab of Kyle Bishop, associate professor of chemical engineering, proposes a strategy for microscale robots that can sense symptoms of a material defect and navigate autonomously to the defect site, where corrective actions could be performed. The study was published in Physical Review Research on December 2, 2019.

Swimming bacteria look for regions of high nutrient concentration by integrating chemical sensors and molecular motors, much like a self-driving car that uses information from cameras and other sensors to select an appropriate action to reach its destination. Researchers have tried to mimic these behaviors by using small particles propelled by chemical fuels or other energy inputs. While spatial variations in the environment (e.g., in the fuel concentration) can act to physically orient the particle and thereby direct its motion, this type of navigation has limitations.

“Existing self-propelled particles are more like a runaway train that’s mechanically steered by the winding rails than a self-driving car that’s autonomously guided by sensory information,” says Bishop. “We wondered if we could design microscale robots with material sensors and actuators that navigate more like bacteria.”

Bishop’s team is developing a new approach to encode the autonomous navigation of microrobots that is based on shape-shifting materials. Local features of the environment, such as temperature or pH, determine the three-dimensional shape of the particle, which in turn influences its self-propelled motion. By controlling the particle’s shape and its response to environmental changes, the researchers model how microrobots can be engineered to swim up or down stimulus gradients, even those too weak to be directly felt by the particle.

“For the first time, we show how responsive materials could be used as on-board computers for microscale robots, smaller than the thickness of a human hair, that are programmed to navigate autonomously,” says Yong Dou, a co-author of the study and a PhD student in Bishop’s lab. “Such microrobots could perform more complex tasks such as distributed sensing of material defects, autonomous delivery of therapeutic cargo, and on-demand repairs of materials, cells, or tissues.”

Bishop’s team is now setting up experiments to demonstrate their theoretical navigation strategy in practice, using shape-shifting materials such as liquid crystal elastomers and shape memory alloys. They expect the experiments to show that stimuli-responsive, shape-shifting microparticles can use engineered feedback between sensing and motion to navigate autonomously.


Is Bitcoin Mining Really Decentralized?

The cryptocurrency Bitcoin has always prided itself on how its decentralized payment system eliminates the need for banks to act as middlemen. Because no central authority supervises the integrity of transactions, the Bitcoin system relies on a network of nodes to verify, update, and store them. Nodes are motivated to take on these tasks by a process called mining, in which they compete to solve a computationally costly problem known as proof-of-work. The winner of each round has the right to update the ledger and is rewarded with newly minted Bitcoins.
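To make "computationally costly problem" concrete, the toy Python sketch below shows the basic idea of proof-of-work: search for a number (a nonce) that makes a hash come out with a rare property, which is expensive to find but cheap to check. It is a simplification, not Bitcoin's actual scheme: real Bitcoin applies double SHA-256 to an 80-byte block header and compares the result with a numeric target, whereas this sketch hashes an arbitrary string once and counts leading hex zeros. The names `mine`, `verify`, and `difficulty` are illustrative only.

```python
import hashlib


def _digest(block_data: str, nonce: int) -> str:
    """Single SHA-256 of the block data with the nonce appended (toy format)."""
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int, max_nonce: int = 10_000_000):
    """Try nonces 0, 1, 2, ... until the digest starts with `difficulty` hex zeros.

    Returns (nonce, digest) for the first nonce that works, or None if no
    nonce below `max_nonce` satisfies the difficulty.
    """
    prefix = "0" * difficulty
    for nonce in range(max_nonce):
        digest = _digest(block_data, nonce)
        if digest.startswith(prefix):
            return nonce, digest
    return None


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking a claimed solution costs a single hash."""
    return _digest(block_data, nonce).startswith("0" * difficulty)


if __name__ == "__main__":
    result = mine("example block", difficulty=4)
    if result is not None:
        nonce, digest = result
        print(nonce, digest, verify("example block", nonce, 4))
```

Each additional hex zero multiplies the expected number of attempts by 16, while verification always takes one hash. Because winning comes down to trying hashes faster than everyone else, a miner's chance of mining a block grows roughly in proportion to its share of the network's total computing power, which is why investment in faster hardware pays off.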

Bitcoin’s founder envisioned a completely decentralized system in which almost anyone could mine using off-the-shelf computers. As the price of Bitcoin rose, however, firms began investing in more efficient hardware to increase their computational power, and thus their probability of successfully mining blocks. As a result, mining operations have become increasingly vertically integrated, with single firms designing mining chips, maintaining the hardware, and operating one or more data centers.

A recent study from Agostino Capponi, associate professor of industrial engineering and operations research, examines whether Bitcoin is still truly decentralized. Working with his PhD student Homoud Alsabah, Capponi developed a model to address this question. They found that developing more efficient mining hardware does not allow firms to attain higher profits.

“Instead, an arms race ensues and all firms are worse off,” Capponi notes. Paradoxically, his study shows that the proof-of-work protocol has the unintended consequence of driving the mining industry toward centralization, a severe problem that undercuts the very reason cryptocurrency was introduced: a decentralized payment system. The findings suggest that fundamental changes to the Bitcoin mining protocol are needed to support a long-lasting decentralized payment system.

“If our predictions are correct, then Bitcoin will not be able to sustain its dominant position among cryptocurrencies,” Capponi adds. “Developers of alternative blockchain protocols need to avoid these pitfalls of Bitcoin’s proof-of-work protocol in order to be successful.”

The paper has received two awards: one in the INFORMS Finance Student Paper Competition and a best doctoral paper award at the second Toronto FinTech Conference earlier this year.

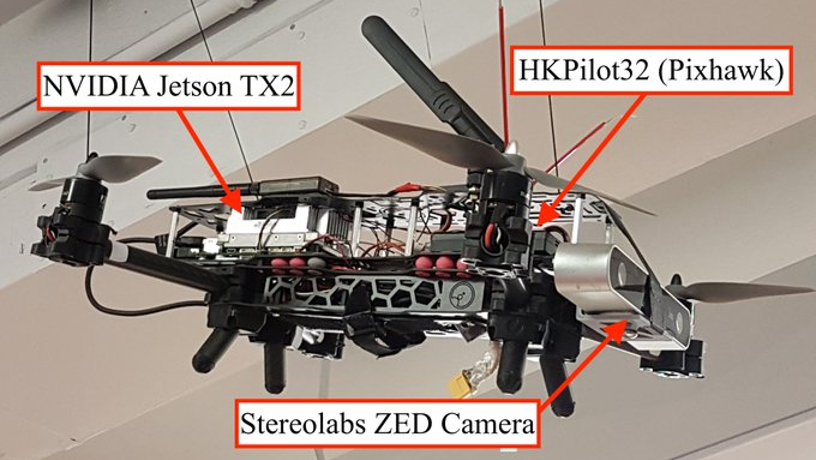

Autonomous hunting drone that can operate in GPS-denied environments thanks to its deep-learning-based approach.

Columbia Researchers Take on the Fight Against Rogue Drones

As drones become more popular, drone-related incidents are increasing. According to the FAA Aerospace Forecast, the number of deployed consumer UAVs (unmanned aerial vehicles) in the US will rise to 3.55 million by 2020. Accidents are already happening. In January 2015, a drone “driven” by a drunken US government worker crashed onto the lawn of the White House; in December 2018, a rogue drone shut down Gatwick Airport, leaving more than 100,000 people temporarily stranded. In Syria, ISIS has strapped grenades to UAVs to attack military bases; in Mexico, cartels use them to fight the police.

“Most people, including police officers, security guards, and military personnel, are not expert in flying a drone, and we cannot expect them to compete with a skilled attacker or an AI-powered drone pilot,” says Philippe Wyder, a PhD student in Mechanical Engineering Professor Hod Lipson’s lab and lead author of a study published November 18 in PLOS ONE. “The threats posed by fully autonomous AI-piloted drones are only going to get worse—it’s clear that the future of drone hunting has to be autonomous.”

Wyder and his team propose an autonomous hunting drone—a self-contained UAV defense system—that can chase and neutralize another drone in an environment where GPS is not available (forests, tunnels, tall buildings, etc.), while performing all computations on board. The team designed a prototype that takes off at the push of a button and then searches for, detects, and pursues its target without any further instruction.

The researchers developed their deep-learning-based approach without relying on a motion-capture system, establishing a baseline for autonomous drone hunting in GPS-denied environments. The drone runs a Tiny YOLO deep neural network for target detection and uses visual-inertial odometry to localize itself in its environment. The detection algorithm was tuned to run with 77% accuracy at about 8 frames per second, allowing the “hunter” to chase a target drone at 1.5 m/s in an enclosed space. While detection speed is bottlenecked by limited onboard computing power, Wyder expects more advanced computing modules to improve the frame rates and response times of these systems.
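The figures in the paragraph above can be put in perspective with simple arithmetic. The Python sketch below reuses only the quoted numbers (about 8 frames per second, 1.5 m/s, 77% accuracy); the helper names are hypothetical, and treating the 77% accuracy as an independent per-frame detection rate is a simplifying assumption, not necessarily how the study defines accuracy.

```python
# Figures quoted in the study summary.
FPS = 8.0           # approximate detection rate, frames per second
TARGET_SPEED = 1.5  # chase speed in the enclosed space, metres per second
ACCURACY = 0.77     # reported detection accuracy


def gap_between_frames(speed_m_s: float, fps: float) -> float:
    """Distance (m) the target can move between two consecutive detections."""
    return speed_m_s / fps


def frame_interval_ms(fps: float) -> float:
    """Time (ms) between consecutive frames."""
    return 1000.0 / fps


def expected_misses_per_second(fps: float, accuracy: float) -> float:
    """Expected undetected frames per second.

    Assumption: accuracy behaves like an independent per-frame hit rate,
    which is a simplification for illustration only.
    """
    return fps * (1.0 - accuracy)


if __name__ == "__main__":
    print(f"frame interval: {frame_interval_ms(FPS):.0f} ms")
    print(f"target moves {gap_between_frames(TARGET_SPEED, FPS):.2f} m per frame")
    print(f"~{expected_misses_per_second(FPS, ACCURACY):.1f} missed frames per second")
```

At roughly 8 frames per second, a target moving at 1.5 m/s travels close to 19 cm between detections; doubling the frame rate halves that gap, which is one reason faster onboard computing should help the hunter react sooner.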

“Drone hunting is a complex robotics challenge that so far has only been addressed by a few commercial companies—it’s been entirely neglected by academia,” Wyder adds. “But academic drone research is a critical part of the solution. Many of the technologies involved in building a ‘drones for good’ platform translate directly to many other fields, such as search and rescue, human-robot interaction, and robot-robot interaction.”

The team has created a baseline for further development in drone hunting by the research community. They have shared their findings online, together with the code and data needed to reproduce them. Future design challenges include improving tracking speed and accuracy, inferring the target's location when it is occluded, and adding a tracking algorithm that can discriminate between multiple possible targets.

“We hope that making our research openly available will enable other researchers to use the work as a baseline, and train their own neural networks to detect and/or track drones,” Wyder says.